AI, Legal Tech, and the Hiring Gap Law Firms Didn’t Plan For

Most law firms now use AI for contract review, legal research, or document analysis. But walk into a partner meeting and ask who’s responsible when the AI screws up, and you’ll get silence. Not because people are incompetent but because nobody’s job description says “make sure the AI doesn’t hallucinate case citations” or “explain to the client why we let a chatbot touch their trade secrets.”

The problem isn’t the technology. It’s that firms bought software designed to change how work gets done, then hired nobody to manage that change.

The Three Complaints Partners Actually Make

When firm leaders say AI tools “aren’t delivering,” here’s what they mean in practice:

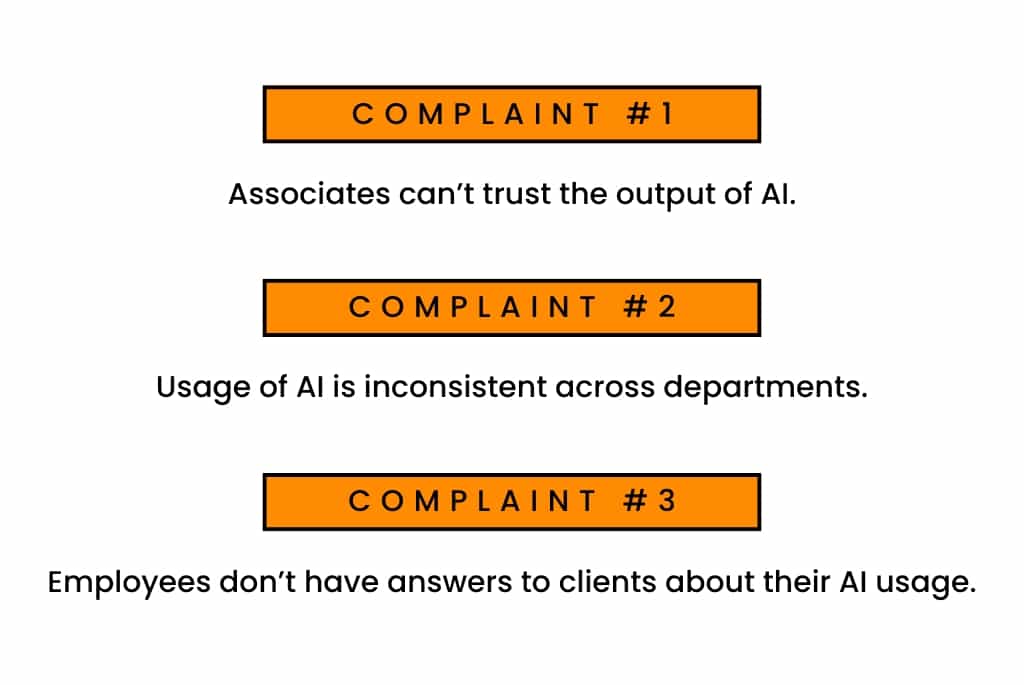

Associates don’t trust the output. They run AI-assisted research, then redo it manually because nobody taught them how to spot when the AI is right versus when it’s confidently wrong. You’re paying for the tool and the manual work.

The usage is chaos. The corporate team uses document review AI on every deal. The litigators won’t touch it. Nobody knows why, and there’s no one whose job it is to figure it out.

Clients ask basic questions nobody can answer. “Which of our matters involved AI?” “How do you validate AI research?” “Who reviews the output?” The answers are either “I don’t know” or “it depends.”

None of this is an AI problem. It’s an accountability problem.

Why Hiring Didn’t Keep Up

According to the American Bar Association, 30% of law firms used AI tools in 2024, up from 11% the year before. Among larger firms (100+ attorneys), nearly half reported using AI.

But job descriptions stayed the same:

- Associates bill hours and produce work product

- Paralegals handle documents and scheduling

- IT keeps the servers running

AI doesn’t fit that structure. Someone needs to set standards, validate outputs, train users, and answer client questions about risk. Most firms never decided who.

What the Gap Actually Looks Like

This isn’t a lawyer shortage. Law schools still graduate thousands of attorneys annually. The issue is that nobody trains lawyers to do what AI-era legal work requires:

- Evaluating whether AI-generated research missed controlling authority

- Writing prompts that produce usable contract summaries instead of garbage

- Knowing when automation creates more risk than it eliminates

- Explaining to a general counsel how you used AI on their matter without making them nervous

These skills fall between “practicing law” and “understanding technology.” Traditional legal hiring doesn’t account for that middle ground.

Where It Breaks Down

The gap hits hardest in operational roles:

Legal operations professionals who can analyze AI-enabled billing data and actually understand what it means for matter profitability. Most firms still treat legal ops as optional overhead.

Paralegals with AI platform experience who know the difference between an AI tool working and an AI tool appearing to work. These people are getting poached by corporate legal departments that figured this out faster.

Practice managers who can enforce consistent AI usage across teams. Right now, every practice group does its own thing, and nobody has the authority to impose standards.

The 2024 Legal Department Operations Index from the Thomson Reuters Institute found that corporate legal teams are prioritizing technology workflow improvements and expanding legal ops roles. Law firms are losing talent to clients who take this seriously.

Why Training Doesn’t Fix It

Firms respond with training sessions: typically an hour-long demo on how to use the new research tool.

Training explains features, but it doesn’t create accountability. It doesn’t resolve arguments about when AI is appropriate. It doesn’t establish who checks the work.

When nobody owns the outcome, problems accumulate quietly until they surface in a client complaint or a malpractice scare.

What Good Firms Are Doing Differently

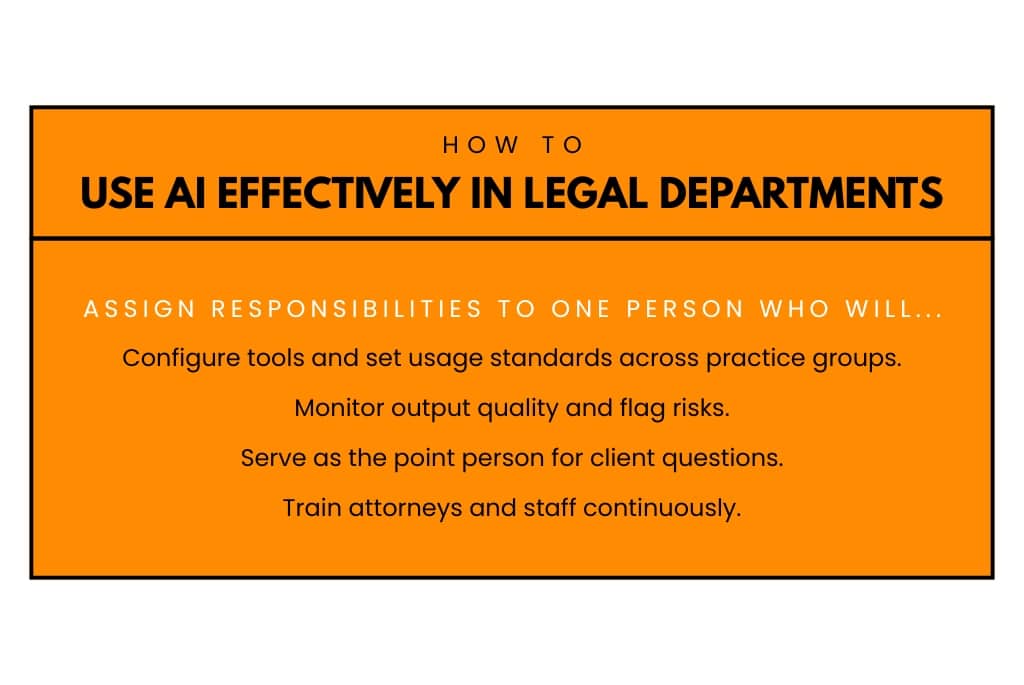

Firms that report actual results from AI tools assign clear ownership. Sometimes they do it through a formal title; more often, they explicitly add responsibilities to someone’s role:

- Configure tools and set usage standards across practice groups

- Monitor output quality and flag risks

- Serve as the point person for client questions

- Train attorneys and staff continuously

This isn’t an IT role (IT staff don’t understand legal risk). It isn’t a practicing attorney role (attorneys don’t have time and often lack tech fluency). It’s an operational role that requires both legal judgment and technical competence.

Firms that wait for this talent to emerge organically usually wait too long.

The Associate Training Problem Nobody Talks About

AI changes how junior lawyers learn. Previous generations built judgment through repetition: drafting fifty discovery responses and learning what works. AI collapses that volume. Associates now edit machine-generated drafts instead of writing from scratch.

Faster? Yes. Better training? Unclear.

Some firms are deliberately reserving certain work for manual completion just to preserve training value. Others are ignoring this entirely and may end up with mid-level attorneys who’ve never built a contract from scratch.

Clients Are Starting to Ask Harder Questions

Corporate clients care less about whether you’re using AI and more about whether you can explain its use without creating confidentiality or privilege risks.

Thomson Reuters’ 2025 report found that 57% of clients want their law firms using generative AI, but 71% don’t know if their outside counsel actually is. That gap creates pressure: clients want efficiency but also want answers about where AI touches their matters.

The Association of Corporate Counsel published sample AI guidelines for outside counsel that include disclosure and control requirements. This is clients formalizing expectations, not making polite inquiries.

When firms can’t answer clearly, trust erodes. This shows up in RFPs, audits, and renewals.

What to Do Instead of Buying More Software

Before investing in another AI tool, answer these:

- Who owns outcomes after implementation? Not who clicks “install” but who’s accountable when it produces bad work?

- How is output quality monitored? Hoping associates catch errors isn’t a system.

- Which roles need hybrid skills? And are you hiring for them or hoping existing staff figure it out?

Until you have clear answers, more software won’t help.

The Uncomfortable Truth

Legal tech will keep advancing whether law firms adapt their hiring or not. AI capabilities will expand. Vendors will improve their tools. Client scrutiny will increase.

Firms that treat legal tech as “just software” will fall behind, not dramatically, but through accumulated inefficiency. The waste won’t be obvious. It’ll show up as underutilized licenses, inconsistent quality, and associates spending Friday nights redoing work the AI already did.

Mid-sized firms face the highest risk. Large firms can absorb inefficiency. Small firms can stay simple. Mid-sized firms adopt aggressively without building the internal capability to make it work.

The firms that succeed will be the ones that stop assuming every technology problem can be solved by lawyers alone.

Tyler is the SEO & Marketing Associate for The Richmond Group USA and its sister companies. In his day-to-day work, Tyler creates informative blog posts and case studies that educate our audience on the work we do and the effect it has on our clients.